A few weeks ago, I wrote about how the road to AI-native runs through integration. The argument was simple: the AI model isn’t the bottleneck anymore. The plumbing is.

The response from the field confirmed something I’ve been seeing in every deal conversation we have. The question isn’t “should we invest in AI?” anymore. It’s “we’ve invested in Agentforce and ServiceNow AI and Copilot and three other things — why isn’t any of it working the way we expected?”

I think the answer is that most companies are optimizing the wrong layer.

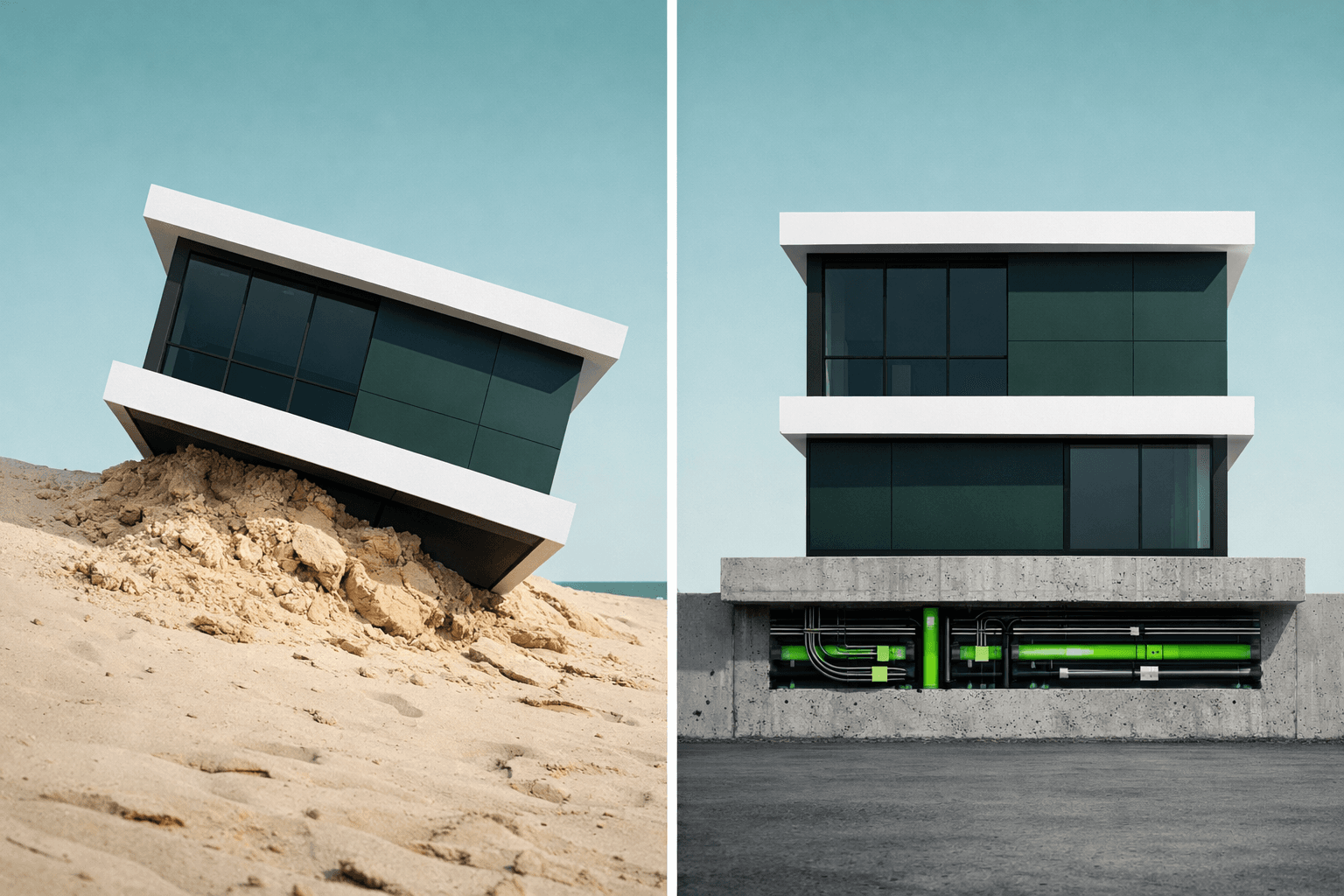

Everybody’s Arguing About the Roof While the Foundation Is Missing

Here’s a conversation I hear constantly: “Should we use Tableau or Power BI? Should we add Agentforce or build a custom AI agent? Should we go with Claude or GPT for our internal tools? What about Gemini? I heard they made some great updates recently!”

These are all roof-level decisions. They’re about how you consume data — how you look at it, interact with it, ask questions about it. And they matter. But they don’t matter first.

What matters first is whether the data is accessible at all.

Let’s use a real example that I’ve seen countless times. A company is running three separate ERPs across multiple business units. This typically comes about through acquisition or because of BU-specific niche ERP needs. Each ERP holds a piece of the truth. The institutional knowledge about how these systems relate lives in the heads of maybe four people.

There’s often some confusion about how to handle this, and most teams go straight to dashboards. That makes sense on the surface: they need visibility across the business, and products like Tableau are purpose-built for that.

But here’s what actually needs to happen before a single chart gets built: the data from three ERPs needs to be normalized into a single, accessible layer. System APIs unlocking each ERP. Process APIs normalizing the data into a canonical model that unifies the overall business process into something meaningful. A unified foundation that makes the data available to anything that wants to consume it.
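To make the System API and Process API split concrete, here is a minimal Python sketch. Everything in it is an assumption for illustration: the three ERP field layouts, the `InventoryRecord` canonical model, and the function names are hypothetical, and hard-coded rows stand in for real ERP calls. It is not how MuleSoft or any particular ERP exposes data, only the shape of the idea.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InventoryRecord:
    """Canonical shape shared by every consumer (hypothetical model)."""
    site: str
    part_number: str
    quantity_on_hand: int


# System APIs: one per ERP, each returning that ERP's native shape.
# Hard-coded sample rows stand in for real ERP calls.
def erp_a_inventory() -> list[dict]:
    return [{"PartNo": "AX-100", "QtyOnHand": 40}]


def erp_b_inventory() -> list[dict]:
    return [{"item_code": "ax-100", "stock": "25"}]


def erp_c_inventory() -> list[dict]:
    return [{"sku": "AX-100 ", "available": 10, "reserved": 3}]


# Process API: normalize the three native shapes into the canonical model.
def unified_inventory() -> list[InventoryRecord]:
    records = []
    for row in erp_a_inventory():
        records.append(InventoryRecord("site-a", row["PartNo"], row["QtyOnHand"]))
    for row in erp_b_inventory():
        # ERP B stores part codes in lowercase and quantities as strings.
        records.append(
            InventoryRecord("site-b", row["item_code"].upper(), int(row["stock"]))
        )
    for row in erp_c_inventory():
        # ERP C pads SKUs and splits stock into available and reserved.
        records.append(
            InventoryRecord("site-c", row["sku"].strip(), row["available"] + row["reserved"])
        )
    return records
```

The quirks of each system (casing, string quantities, split stock fields) are handled once, in the normalization step, so nothing downstream needs to know they exist.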

Once that layer exists, Tableau is one consumer. It gets data shaped for visualization — structured datasets, aggregations, time-series formatting. That’s what Tableau is great at.

But an LLM is a consumer too. Give Claude or GPT access to the same data layer, shaped for reasoning instead of charting, and suddenly you have a conversational analyst that can answer the questions you didn’t think to put on a dashboard. “What’s our inventory position on this part across all three sites?” “Which supplier has the longest lead time variance this quarter?” “Show me every order where margin dropped below 15% and tell me why.”

Tableau answers the questions you already know to ask. An LLM answers the ones you don’t.

And both of them — the deterministic analytics and the conversational AI — are just consumers of the same foundation. You build the integration once. You get unlimited ways to use the data.

This reframe matters because most companies are making multi-year, multi-million-dollar decisions at the Experience layer, the layer where data actually gets consumed (picking Tableau vs. Power BI, Agentforce vs. custom agents, Copilot vs. Claude), without building the foundation that makes any of them actually work. They’re choosing the roof before pouring the concrete.
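The "one foundation, many consumers" idea can be sketched in a few lines of Python. The `ORDERS` rows, the field names, and both shaping functions are hypothetical stand-ins: one shapes the data for a chart, the other shapes the same data as plain-text context that could be handed to an LLM. No real Tableau or LLM API is called here.

```python
from collections import defaultdict

# Stand-in for what the unified data layer returns (hypothetical sample rows).
ORDERS = [
    {"order_id": "SO-1", "site": "site-a", "revenue": 1000.0, "cost": 900.0},
    {"order_id": "SO-2", "site": "site-b", "revenue": 2000.0, "cost": 1400.0},
    {"order_id": "SO-3", "site": "site-a", "revenue": 500.0, "cost": 450.0},
]


def margin(order: dict) -> float:
    """Gross margin as a fraction of revenue."""
    return (order["revenue"] - order["cost"]) / order["revenue"]


def for_dashboard(orders: list[dict]) -> dict[str, float]:
    """Shape for charting: revenue aggregated per site."""
    totals: dict[str, float] = defaultdict(float)
    for order in orders:
        totals[order["site"]] += order["revenue"]
    return dict(totals)


def for_llm(orders: list[dict], margin_floor: float = 0.15) -> str:
    """Shape for reasoning: low-margin orders as plain-text prompt context."""
    lines = [
        f"Order {o['order_id']} at {o['site']}: margin {margin(o):.0%}"
        for o in orders
        if margin(o) < margin_floor
    ]
    return "\n".join(lines)
```

Both functions read the same rows. Adding a third consumer, say a mobile view, would mean writing one more small shaping function rather than another pipeline.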

Why This Is an SMB Superpower

Enterprise companies can afford to build separate data pipelines for each consumption pattern. They’ve got middleware teams and seven- or eight-figure integration budgets. They’ll brute-force it. This is a bad idea, but they’ll make it work by throwing resources at it.

SMBs can’t do that. And that’s actually the advantage.

When you’re a 50-person company with eight core systems and a lean IT team, you can’t afford to build a Tableau pipeline AND an AI pipeline AND a mobile pipeline. But you can afford to build one integration layer that serves all three.

The economics of API-led connectivity favor SMBs. One investment, compounding returns. Every new consumer you add to the data layer is incremental — not a new project. Your first integration project delivers a Tableau dashboard. Your second “project” is just a new Experience API that gives an LLM the same data. The heavy lifting was already done.

MuleSoft builds the foundation that makes every technology investment you make from now on — analytics, AI, mobile, portals — work on day one instead of year two.

What to Do About It

Stop evaluating AI and analytics tools in isolation. The tool doesn’t matter if the data isn’t accessible. Start with the data layer, then let the consumption pattern drive itself.

Ask the foundation question first. Before any new technology initiative, ask: “Can this tool access the data it needs through an API, or are we going to build another point-to-point integration that only serves this one use case?” If the answer is point-to-point, you’re building a roof on sand.

Think in consumers, not projects. Every integration project should be designed to serve multiple consumers from day one. If you’re building a Salesforce-to-ERP integration, build it as a reusable API — not a Salesforce-specific connector. Tomorrow’s AI agent will thank you.

Move now. The companies building their data foundation today will be the ones deploying AI agents, conversational analytics, and tools that don’t exist yet — on top of infrastructure that’s already paid for. The companies waiting to “see how AI plays out” will be starting from scratch every time.
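As a rough illustration of "think in consumers, not projects," the Python sketch below defines a consumer-agnostic order API and points two different consumers at it. The `OrderApi` contract, the in-memory implementation, and both consumer functions are hypothetical names invented for this example. They are not Salesforce, MuleSoft, or ERP vendor APIs.

```python
from typing import Protocol


class OrderApi(Protocol):
    """Consumer-agnostic contract (hypothetical); nothing here mentions a CRM."""

    def get_order(self, order_id: str) -> dict: ...


class InMemoryErpOrders:
    """Stand-in for a real ERP-backed implementation of OrderApi."""

    def __init__(self, orders: dict[str, dict]) -> None:
        self._orders = orders

    def get_order(self, order_id: str) -> dict:
        return self._orders[order_id]


def crm_order_status(api: OrderApi, order_id: str) -> dict:
    """Today's consumer: a CRM sync that needs only the id and status."""
    order = api.get_order(order_id)
    return {"order_id": order_id, "status": order["status"]}


def agent_order_summary(api: OrderApi, order_id: str) -> str:
    """Tomorrow's consumer: an AI agent tool that wants a sentence, not a record."""
    order = api.get_order(order_id)
    return f"Order {order_id} is {order['status']} with total {order['total']}."
```

Because neither consumer knows where the order data comes from, the second one is added without touching the ERP side at all. A connector written specifically for the CRM would have had to be rebuilt for the agent.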

The Durable Asset

Two years from now, nobody will care which BI tool you picked in 2026. They won’t care which LLM you chose either. Models will keep getting better. Dashboards will keep getting prettier. The consumption layer will keep evolving.

But the company that built a connected, API-accessible data layer across their core systems? That company can plug into whatever comes next — without rebuilding the foundation.

The data layer is the strategy. Everything else is a feature.